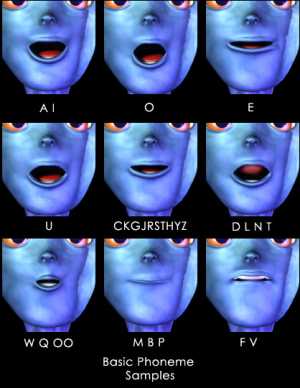

Hi guys, hope you had a good Easter. Mine was pretty cool, as usual trying to fit in family stuff and my own Antics stuff. There are never enough hours in the day, even when you get 4 days off! Anyway, I discovered something today you might be interested in.

Recently I posted about having bones drive the facial expressions of characters. The theory is good and it's been used to great effect within Max by some clever fellows. In case you missed it, a character's expression is achieved by deforming the mesh in certain ways, just like our skin. When we smile, the corners of the mouth are raised, so if you raise the mesh in that region you get a smile from your character. Now, if you're in a 3D app that gives you control over the mesh (the vertices) then it's as easy as pie. However, within Antics we have no such tools. So the other way is to prebuild the smile and no-smile meshes and then have the character "blend" from one to the other. This is most certainly how the EMOTIONS feature is being implemented.

So, today I took another look at the supplied 3ds Max example and realised that it shows us how they achieved the moving mouth effect. For those who don't have Max, the example consists of a "rest" mesh and then a bunch of otherwise identical meshes where the mouth is slightly adjusted to look like it would when making a range of (phoneme) sounds. When the character is commanded to "say" an audio clip, these blend shapes are used.

Sooo I thought, rather than adjust the mouth, what if the geometry was adjusted in other ways (like a smile)? Then instead of a moving mouth we'd get some other stuff... The result was quite good. The character would blend to the new shapes even whilst walking, looking and playing animations! On the downside, the character returns to the rest pose once the event is complete. Still, it means you can use a sound file to drive a specific set of poses, in any version. I'll keep you posted.
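For anyone who hasn't met blend shapes before, here is a rough sketch of the "blend from one to another" idea in plain Python. This is only the general technique (a straight-line mix between two meshes with the same vertex order), not how Antics or Max actually does it internally. The function name `blend_meshes` and the tiny two-vertex "meshes" are made up for illustration.

```python
def blend_meshes(rest, target, weight):
    """Move every vertex from its rest position toward the target position.

    rest, target: lists of (x, y, z) tuples with the same vertex order.
    weight: 0.0 gives the rest mesh, 1.0 gives the target mesh.
    """
    if len(rest) != len(target):
        raise ValueError("meshes must have the same number of vertices")
    return [
        tuple(r + weight * (t - r) for r, t in zip(rest_vertex, target_vertex))
        for rest_vertex, target_vertex in zip(rest, target)
    ]


# Hypothetical data: two mouth-corner vertices, raised by 0.5 in the smile mesh.
rest_mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
smile_mesh = [(0.0, 0.5, 0.0), (1.0, 0.5, 0.0)]

half_smile = blend_meshes(rest_mesh, smile_mesh, 0.5)
```

Stepping `weight` from 0 to 1 over a few frames gives the gradual change, and dropping it back to 0 is the "return to rest pose" behaviour.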
It would be great to see whether a continuous tone keeps the pose steady. If it does, we could run, say, 400 Hz for sad and 1 kHz for happy, etc.
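If you want to try the tone experiment yourself, a test file is easy to make. Below is a small sketch that writes a mono 16-bit WAV containing a steady sine tone, using only Python's standard library. The function name `write_tone`, the file names and the 2 second length are my own picks, and whether Antics responds to a pure tone at all is exactly the thing still to be tested.

```python
import math
import struct
import wave


def write_tone(path, freq_hz, seconds=2.0, sample_rate=44100, amplitude=0.5):
    """Write a mono 16-bit WAV file holding a steady sine tone.

    Returns the number of audio frames written.
    """
    frame_count = int(seconds * sample_rate)
    frames = bytearray()
    for i in range(frame_count):
        sample = amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        # Scale to the signed 16-bit range and pack as little-endian.
        frames += struct.pack("<h", int(sample * 32767))
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)   # mono
        wav.setsampwidth(2)   # 2 bytes = 16-bit samples
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))
    return frame_count


if __name__ == "__main__":
    write_tone("sad_400hz.wav", 400)      # candidate "sad" tone
    write_tone("happy_1khz.wav", 1000)    # candidate "happy" tone
```

Running the script drops the two files in the current folder, ready to load as the audio clip the character "says".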

Ciao.